The most popular deep learning libraries

You might have heard of artificial intelligence, deep learning and neural networks, and wanted a way into this exciting technology. This article gives you a comprehensive overview of the tools and frameworks you can use to accelerate your learning and impact in artificial intelligence, and it will help you decide which deep learning library to start with.

This article assumes familiarity with basic deep learning concepts. If you need to catch up on those, the following Wikipedia article will help you get up to speed.

This is a walkthrough of the most popular deep learning libraries, with examples of clever projects and implementations that have used each library to create something awesome.

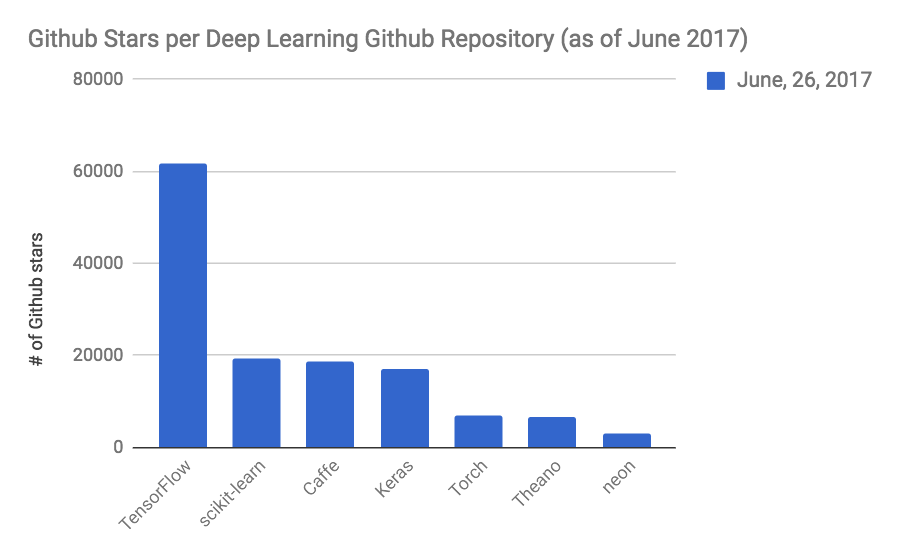

I’ve ranked the deep learning libraries in question by the number of GitHub stars their repositories had collected as of June 2017, a great way to see how much traction these frameworks have with programmers.

1- TensorFlow

Overview: TensorFlow is the open-source machine learning library developed by Google (and still used in both research and production-level applications at the company). The library lets you bring machine intelligence capabilities to all sorts of devices, from GPU-equipped machines to mobile and embedded devices such as the Raspberry Pi. With over 6,000 open-source repositories using TensorFlow, it has quickly become one of the most popular frameworks for those looking to build something with deep learning. TensorFlow is very accessible, with APIs for Python, C++, Haskell, Java, Go and Rust, and a third-party package for R.

Introductory Tutorial: Get introduced to TensorFlow with this official tutorial by Google, which applies TensorFlow to the famous MNIST dataset.

Use Cases: TensorFlow has become one of the most popular deep learning frameworks, and as such it has seen a wide array of uses, from powering cutting-edge machine learning work at Silicon Valley companies to classifying cucumbers for farmers.

Resources:

- This GitHub repository, TensorFlow-World, contains a collection of introductory tutorials with code, compiled to give you a better sense of how to do deep learning with TensorFlow.

- TensorFlow-101 contains tutorials on how to get started with TensorFlow in Jupyter Notebook with Python.

- This GitHub repository contains example code that will help you work through different analyses in TensorFlow.

How to get started: Get all of the documentation and installation instructions here, then start practicing and training deep learning models on different datasets! TensorFlow is one of the most popular and powerful deep learning frameworks out there: take advantage!

2- Scikit-learn

Overview: Scikit-learn is the Swiss Army knife of machine learning in Python, used for simple experimentation and iteration with ready-made machine learning models. With modules like the MLPClassifier, you can easily bring neural network approaches to your datasets and use the rest of the trusty scikit-learn toolkit (such as train_test_split) to validate and evaluate your model. You can also combine the scikit-learn interface with different deep learning libraries if you want to do more powerful analyses.

Introductory Tutorial: This tutorial runs through how to use scikit-learn and a multilayer perceptron model to classify different types of wine. You can see how, with a few lines of code, you can create a very accurate model with many variables.

Use Cases: scikit-learn is often the first tool that people working in the Python data science ecosystem reach for: it ships with common data science distributions such as Anaconda, and it provides optimized implementations of essential machine learning functions. You’ll be able to quickly put together machine learning models, evaluate them, and split your data into training and test sets.
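To make the "few lines of code" claim concrete, here is a minimal sketch of a multilayer perceptron trained on wine data. It assumes the wine dataset bundled with scikit-learn (`load_wine`), which may not be the exact data the linked tutorial uses, and the layer sizes, split ratio and random seeds are arbitrary illustration values rather than recommended settings.

```python
from sklearn.datasets import load_wine
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.preprocessing import StandardScaler

# Load the wine dataset bundled with scikit-learn (assumption: the linked
# tutorial may use a different copy of the data).
X, y = load_wine(return_X_y=True)

# Hold out 25% of the samples for evaluation, keeping class proportions.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Neural networks are sensitive to feature scale, so standardize using
# statistics computed on the training split only.
scaler = StandardScaler().fit(X_train)
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)

# A small multilayer perceptron; the hidden layer sizes are illustrative.
model = MLPClassifier(hidden_layer_sizes=(16, 16), max_iter=2000, random_state=0)
model.fit(X_train_scaled, y_train)

# Mean accuracy on the held-out samples.
accuracy = model.score(X_test_scaled, y_test)
print(f"Test accuracy: {accuracy:.2f}")
```

Fitting the scaler on the training split alone keeps information from the test set out of training, so the reported accuracy is a fairer estimate of how the model handles unseen wines.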

Resources:

- This cheatsheet by DataCamp will help you with many of the essential functions in scikit-learn.

- Springboard has a tutorial that will teach you how to build a simple neural network with scikit-learn and its MLPClassifier module.

- This gentle introduction to scikit-learn can help you ease into this machine learning package.

How to get started: Install scikit-learn (it is included in distributions such as Anaconda) and work with it in Jupyter Notebook. Start training machine learning models, then move on to Scikit Flow, an interface that combines scikit-learn-style code with functionality from Google’s TensorFlow library (covered earlier in this article).

3- Caffe

Overview: Caffe is a deep learning framework built by Berkeley AI Research (BAIR) and sustained by community contributors. It is built for speed, with the ability to classify up to 60 million images a day on a single GPU. It powers a variety of deep learning projects in machine vision, speech and more, ranging from fully fleshed-out applications to academic papers.
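To put the 60 million images a day figure in perspective, the short calculation below converts it into per-second and per-image terms. It is only arithmetic on the number quoted above and assumes the GPU runs around the clock, so it is not a benchmark.

```python
# Throughput figure quoted in the overview above.
IMAGES_PER_DAY = 60_000_000
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds

# Assumes the GPU is busy for the full 24 hours.
images_per_second = IMAGES_PER_DAY / SECONDS_PER_DAY
milliseconds_per_image = 1000 / images_per_second

print(f"{images_per_second:.0f} images per second")  # 694 images per second
print(f"{milliseconds_per_image:.2f} ms per image")  # 1.44 ms per image
```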

Introductory Tutorial: This tutorial built by Berkeley AI researchers will help you get up to speed with this powerful deep learning framework.

Use Cases: Caffe is used for a variety of academic research — this web page has a ton of examples ranging from image classification to training the classic LeNet model.

Resources:

- This blog post will help you get up to speed with using Caffe and Python, with a relevant classification example from Kaggle on how to distinguish between dogs and cats.

- This tutorial will help you with loading Caffe in IPython Notebook and also with C++ implementations of the library.

- This tutorial explains how to build a layer of a neural network within Caffe.

How to get started: Use the command line and get started with different use cases of Caffe. You can use this tutorial to get installation instructions across a whole array of different platforms.

4- Keras

Overview: Keras is an open source deep learning library for neural networks written in Python. Authored by François Chollet, the library was meant to be a quick and easy way to experiment with different deep learning models — as a high-level API written entirely in Python, the library is easy to debug and navigate. It supports both convolutional and recurrent neural networks and it is designed to be as intuitive as possible for users to grasp.
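As a rough illustration of how compact a Keras model definition can be, here is a minimal sketch of a small classifier. It assumes Keras is available through the TensorFlow package (`from tensorflow import keras`), and it trains on randomly generated stand-in data, so the numbers it produces mean nothing. The layer sizes, epoch count and batch size are arbitrary illustration values.

```python
import numpy as np
from tensorflow import keras  # assumption: Keras as bundled with TensorFlow

# Synthetic stand-in data: 64 samples, 4 features, 3 classes (not a real dataset).
rng = np.random.default_rng(0)
X = rng.normal(size=(64, 4)).astype("float32")
y = rng.integers(0, 3, size=64)

# Stack layers in order; the sizes here are illustrative only.
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(8, activation="relu"),
    keras.layers.Dense(3, activation="softmax"),
])

# Integer class labels pair with the sparse categorical cross-entropy loss.
model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

# A couple of quick passes over the data, just to show the training call.
model.fit(X, y, epochs=2, batch_size=16, verbose=0)

# One row of class probabilities per input sample.
probabilities = model.predict(X, verbose=0)
print(probabilities.shape)
```

Swapping the `Dense` layers for convolutional or recurrent layers follows the same pattern, which is much of what makes the library quick to experiment with.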

Introductory Tutorial: This introductory tutorial walks through how to get started with Keras, all the way from installing the package to training a model with 99% predictive accuracy on the seminal MNIST dataset.

Use Cases: Most of the time, Keras is used to build simple deep learning models as conceptual sketches: you would validate crude ideas using Keras, and get a first glance at whether or not you had a good idea for a deep learning architecture that can tackle a problem.

Resources:

- This course by DataCamp helps you dive deeper into Keras, even if you’re just a deep learning beginner!

- Here is a link to the official Keras documentation, which explains the inner workings of the framework straight from its creators.

- If you’re more comfortable with the R programming language, you can use the R interface to Keras.

5- Torch

Overview: Torch is an open-source deep learning framework designed for high performance on GPUs. It is scripted in the programming language Lua, with an underlying C/CUDA implementation. It allows for the parallelization of neural networks across different CPUs and GPUs. Torch is used by a lot of organizations at the cutting edge of machine learning, from Google to Facebook. It has been extended for use in mobile settings, with the ability to run on iOS and Android.

Introductory Tutorial: This 60-minute blitz into Torch will get you started and ready to use this powerful deep learning tool.

Use Cases: The deep learning library Torch is used for a lot of machine learning and deep learning research programs at leading companies such as Google, Twitter, and Facebook. Given its heavy use at Facebook Research, it benefits from the support of one of the largest tech companies in the world.

Resources:

- You’ll want to get started understanding Lua before you work with Torch. This handy guide will help you get up to speed.

- This GitHub repository contains a whole host of Torch tutorials.

- The following blog article by Facebook Research contains a lot of related work done in Torch.

How to get started: Get the deep learning library Torch installed, then start running it on different deep learning problems. You might want to refer to the Facebook research blog for inspiration.

6- Theano

Overview: Theano is a deep learning framework that sits squarely within the Python data science ecosystem, with tight integration with the NumPy numerical computing library. It can also generate C code to make computations even speedier, and it is the default teaching tool used in the lab of deep learning founding father Yoshua Bengio.

Introductory Tutorial: This Theano tutorial on Jupyter Notebook will help you understand the nuances of the deep learning library Theano, and will get you up to speed with different conventions within the library.

Use Cases: This forum will help you understand the different problems and use cases Theano users tackle with the deep learning library.

Resources:

- This documentation will help you understand more about the deep learning library Theano.

- This blog post will help you understand the performance differences between Theano and Torch.

- Here is an introductory tutorial to Theano.

How to get started: Use the instructions here to get started with installing Theano.

7- Neon

Overview: Neon is an open-source deep learning platform built on Python that aims for the most powerful implementation of deep learning possible while keeping simplicity in mind.

Introductory Tutorial: Work with this GitHub repository, whose Model Zoo covers different scenarios of working with Neon.

Use Cases: This YouTube playlist walks through different use cases with Neon, including playing Pong and speech recognition.

Resources:

- Learn how to do basic classification with the MNIST database and the deep learning library Neon with this introductory-level tutorial.

- Use this video course to get started with Neon.

- This compilation of Neon resources will help you learn the framework.

How to get started: Get the deep learning library Neon installed with the following instructions.