Where To Get Free GPU Cloud Hours For Machine Learning

An Introduction To The Need For Free GPU Cloud Compute

GPUs were once used almost exclusively for video games. Now they power machine learning models around the world thanks to their massively parallel architecture. For many machine learning practitioners and hobbyists, access to free GPU cloud hours has become a necessity.

In short, traditional CPUs are good at complex calculations performed sequentially, while GPUs excel at many simple calculations performed in parallel across many cores. GPU hardware is designed to run these simple calculations in parallel faster than a CPU can run them in sequence.

That makes them a natural fit for training deep neural networks. The RAPIDS framework also extends GPU acceleration to regular machine learning work and to data visualization tasks. The resulting speedups can take algorithms that normally run for upwards of 30 minutes and cut them down to about 3 seconds.

How do we take best advantage of this scenario? Fortunately, there are many GPU cloud providers that are offering free GPU cloud compute time so you can run experiments and try out these new processes.

1 – Google Colab

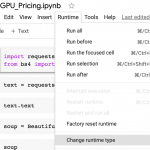

Google Colab lets you upload Python notebooks to the cloud and work with GitHub: you can pull repositories to modify, or push work from Colab files to GitHub. If you have a Google Drive account, you can access your Colab notebooks from Google Drive. To switch to a GPU runtime, click Runtime in the menu bar at the top and change the runtime type to GPU.

Specs:

- Free access to Tesla K80 GPU

- Up to 12 hours of consecutive runtime per day

- 12 GB of RAM
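
After switching the runtime, it can be worth confirming that a GPU is actually attached before starting a long job. The snippet below is a minimal sketch, not an official Colab API: it assumes the NVIDIA driver's `nvidia-smi` tool is on the path (as it normally is on GPU runtimes), and `list_nvidia_gpus` is a hypothetical helper name.

```python
import shutil
import subprocess


def list_nvidia_gpus():
    """Return one 'name, memory' string per visible NVIDIA GPU, or [] if none."""
    # nvidia-smi ships with the NVIDIA driver; if it is missing, assume no GPU.
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            timeout=30,
        )
    except (OSError, subprocess.TimeoutExpired):
        return []
    if result.returncode != 0:
        return []
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


gpus = list_nvidia_gpus()
if gpus:
    for gpu in gpus:
        print("GPU found:", gpu)
else:
    print("No NVIDIA GPU visible; check the runtime type.")
```

The same check works in any notebook environment that exposes an NVIDIA GPU, so it can be reused on the other providers in this list.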

2 – Kaggle GPU (30 hours a week)

Kaggle is a platform where data scientists and machine learning engineers can demonstrate their ability to build accurate models.

They offer 30 hours a week of free GPU time through their Kernels. The hardware is NVIDIA Tesla P100 GPUs. Kaggle intends the GPUs for deep learning, and they don't accelerate other kinds of workflows out of the box, though you might try using RAPIDS, which offers pandas-like and scikit-learn-like functions.

While the GPU time is offered for free, they do offer certain recommendations. You should, as with Google Compute, monitor when you’re using GPU time and switch it off when you’re not. Even if it’s monetarily free, you’ll want to be careful with the time you’re allotted. The limit of six hours of consecutive runtime means that you won’t be able to train complex state-of-the-art models that often take days to fully train.

Specs:

- Free access to NVIDIA Tesla P100 GPUs

- Up to 30 hours a week of free GPU time, with six hours of consecutive runtime

- 13 GB of RAM
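
To see what the quota means in practice, the limits above can be turned into a rough session plan. This is only a sketch: it assumes your training job can save a checkpoint and resume between sessions, and `plan_sessions` is a hypothetical helper, not part of any Kaggle tooling.

```python
import math

WEEKLY_QUOTA_HOURS = 30   # weekly GPU quota from the specs above
MAX_SESSION_HOURS = 6     # consecutive runtime limit from the specs above


def plan_sessions(training_hours):
    """Return (sessions, weeks) needed to fit a job under both limits.

    Assumes the job can checkpoint and resume between sessions.
    """
    sessions = math.ceil(training_hours / MAX_SESSION_HOURS)
    weeks = math.ceil(training_hours / WEEKLY_QUOTA_HOURS)
    return sessions, weeks


for hours in (4, 20, 48):
    sessions, weeks = plan_sessions(hours)
    print(f"{hours} h of training -> {sessions} session(s) over {weeks} week(s)")
```

A model that needs two full days of training would take eight sessions spread over two weeks, which is why the consecutive runtime limit rules out the largest models.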

3 – Google Cloud GPU

For each Google account that you register with Google Cloud, you get $300 USD of credit that you can spend on GPUs. That can get you over 850 hours of GPU training time on their NVIDIA Tesla T4. In practice, though, you'll want to try more powerful GPU instances with Google Cloud, since you can already get a baseline for free with Google Colaboratory. You'd be able to train relatively powerful models in that time, or use it to practice machine learning work with RAPIDS. This tutorial goes over the setup of the GPU.

Note that the virtual machine keeps billing for as long as it is running, so turn it off when you're not using it. You'll also be billed for anything you use beyond the $300 USD credit, so be careful to avoid unneeded charges.
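
The credit arithmetic is easy to sketch. The hourly rate below is an assumption, not a figure from Google: $0.35 per hour is simply a value that reproduces the "over 850 hours" estimate, and real prices vary by region and by the VM the GPU is attached to.

```python
CREDIT_USD = 300.0        # sign-up credit mentioned in the text
ASSUMED_RATE_USD = 0.35   # assumption: hourly price of one T4, not from the source


def hours_covered(credit_usd, hourly_rate_usd):
    """GPU hours the credit pays for at a flat hourly rate."""
    return credit_usd / hourly_rate_usd


def credit_left(credit_usd, hourly_rate_usd, hours_running):
    """Remaining credit after the VM has been on for hours_running hours, idle or not."""
    return credit_usd - hourly_rate_usd * hours_running


print(f"Hours covered: {hours_covered(CREDIT_USD, ASSUMED_RATE_USD):.0f}")
# A VM left on over a weekend (48 h) is billed even if no training runs.
print(f"Credit left after an idle weekend: ${credit_left(CREDIT_USD, ASSUMED_RATE_USD, 48):.2f}")
```

Plugging in the rate of a more powerful GPU shows how quickly the number of covered hours shrinks.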

4 – Microsoft Azure

Microsoft Azure also offers a $200 credit when you sign up, which you can use for Azure’s GPU options. This blog post explains how you can get up to $500 a year in credits.

5 – Gradient (Free community GPUs)

Tired of using Google/Microsoft infrastructure or want to try something new? Gradient offers free community GPU cloud usage attached to their notebooks. This blog post offers a more in-depth perspective on their community notebooks.

6 – Twitter Search for Free GPU Cloud Hours

You can always keep an eye out for promo codes and other cloud providers offering free GPU cloud hours by searching Twitter for relevant keywords.

With the right search query, you’ll be alerted to the latest offerings. I’ll try to retweet a few if you want to follow my personal Twitter account.

7 – An alternative: build your own machine learning computer with GPU

If you're tired of the constraints of cloud compute, from cost to execution time limits, one solution might be to go as far as building your own machine. Your only ongoing constraint is the power cost, which can be higher than expected with these powerful machines.

Still, you’ll be able to fully control your configuration and the hardware you use. It can be very cost-efficient, since you can run your own machine 24/7 — and you can build your own machine learning GPU rig for less than $1,000.
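
Whether a home rig pays off depends on how much you would otherwise spend on cloud GPUs. The sketch below estimates a break-even point. Only the $1,000 build cost comes from the text; the cloud rate, power draw, and electricity price are hypothetical placeholders you should replace with your own numbers.

```python
def break_even_hours(rig_cost_usd, cloud_rate_usd, power_kw, electricity_usd_per_kwh):
    """Hours of GPU use after which owning the rig is cheaper than renting."""
    power_cost_per_hour = power_kw * electricity_usd_per_kwh
    saving_per_hour = cloud_rate_usd - power_cost_per_hour
    if saving_per_hour <= 0:
        return None  # the cloud stays cheaper at these prices
    return rig_cost_usd / saving_per_hour


hours = break_even_hours(
    rig_cost_usd=1000.0,           # upper bound mentioned in the text
    cloud_rate_usd=0.50,           # hypothetical cloud GPU price per hour
    power_kw=0.4,                  # hypothetical draw of the rig under load
    electricity_usd_per_kwh=0.15,  # hypothetical electricity price
)
print(f"Break-even after about {hours:.0f} hours ({hours / 24:.0f} days of 24/7 use)")
```

The function returns `None` when electricity alone costs more per hour than renting, in which case the rig never pays for itself at those prices.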